Overview

High-level architecture overview of Archestra Platform components

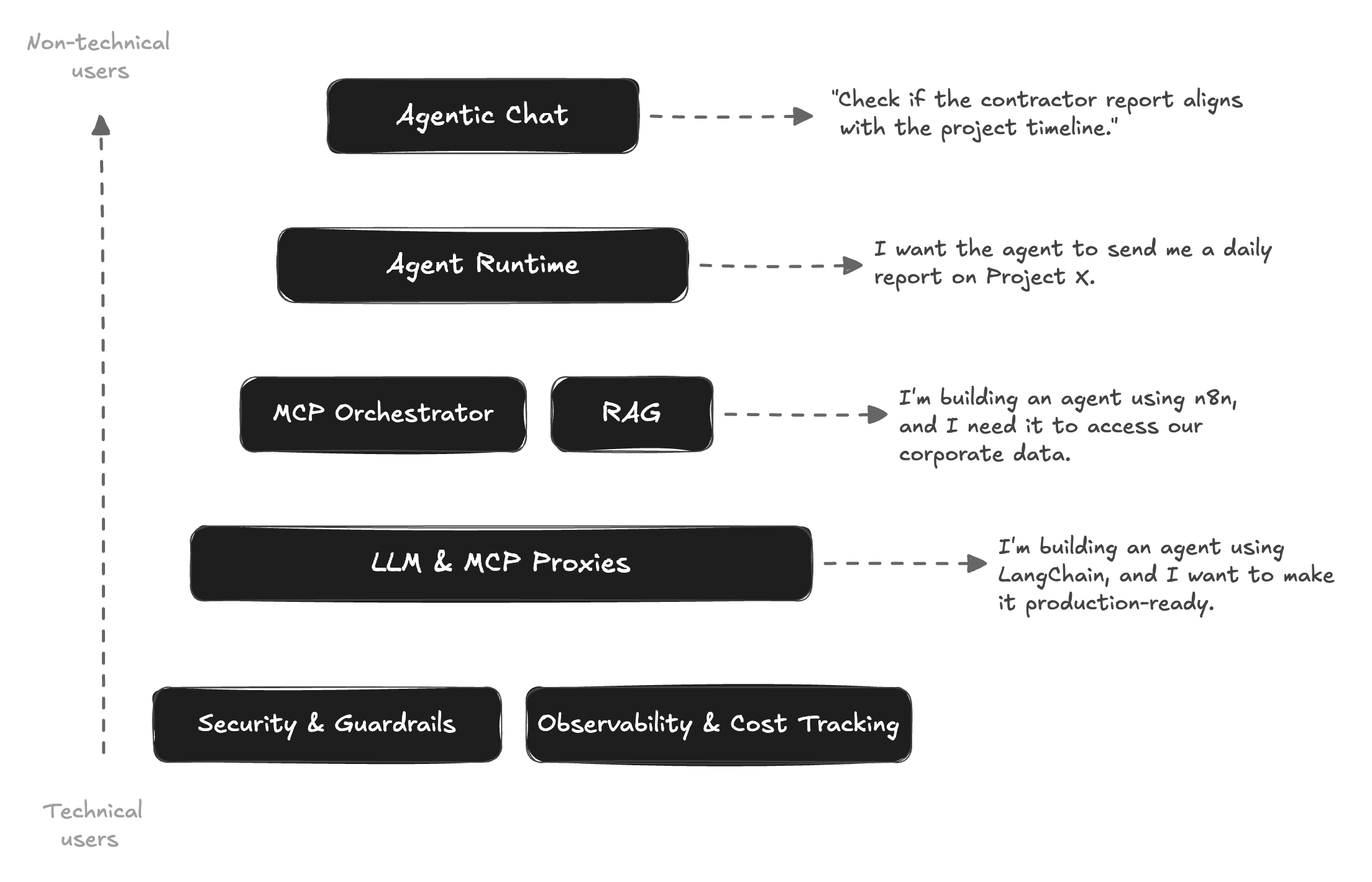

The Full AI Stack for Everyone

Archestra is a centralized AI Platform designed for organizations where software engineers, and non-technical teams all need to work with AI agents. While a non-technical user may enjoy simple ChatGPT-like UI and get immediate results, a technical user may build agents using LangChain, N8N, pure Python or other stack of choice leveraging MCP orchestrator, guardrails and observability. Archestra will reduce friction and increase AI adoption in all cases.

Fun fact: The team behind Archestra.AI previously worked on Grafana OnCall.

Composable Components

Archestra is built as a set of composable components. Most organizations already have tools like n8n, LiteLLM, Grafana, or custom MCP servers in their infrastructure. You can adopt all of Archestra, a few components, or even just one — it integrates with what you already have. We're building an open composable platform and not willing to lock you.

Agentic Chat — ChatGPT-like interface for non-technical users. Talk to agents via web UI, Slack, MS Teams, or Email.

Agent Runtime — No-code builder for autonomous agents. Define system prompts, assign MCP tools and sub-agents, configure triggers.

MCP Orchestrator — Run MCP servers as isolated pods in Kubernetes.

Knowledge Base — Built-in RAG Knowledge Base to give your agents access to your data.

LLM & MCP Proxies — Drop-in proxy between your apps and LLM providers. MCP Gateway provides a single endpoint for all MCP tools. Works with any framework: n8n, LangChain, Vercel AI, Pydantic AI, Mastra.

Security & Guardrails and Observability — Deterministic tool invocation policies and trusted data policies that cannot be bypassed by prompt injection. Prometheus metrics, OpenTelemetry tracing, and per-team cost tracking.

See Pricing Model for licensing details.